Scaling a push notification service in the cloud

Fast Rhymes is a songwriting app for iOS and Android, built with React Native and Firebase (Google Cloud). Its push notification service was built to be as simple as possible, and it worked well for a while. However, now that the user base has grown to around 300K users, with push tokens from 150K of them, the service has become unusable at its current scale. Building a robust backend architecture for sending push notifications that scales with your users can be tricky, because managed cloud services have their limits.

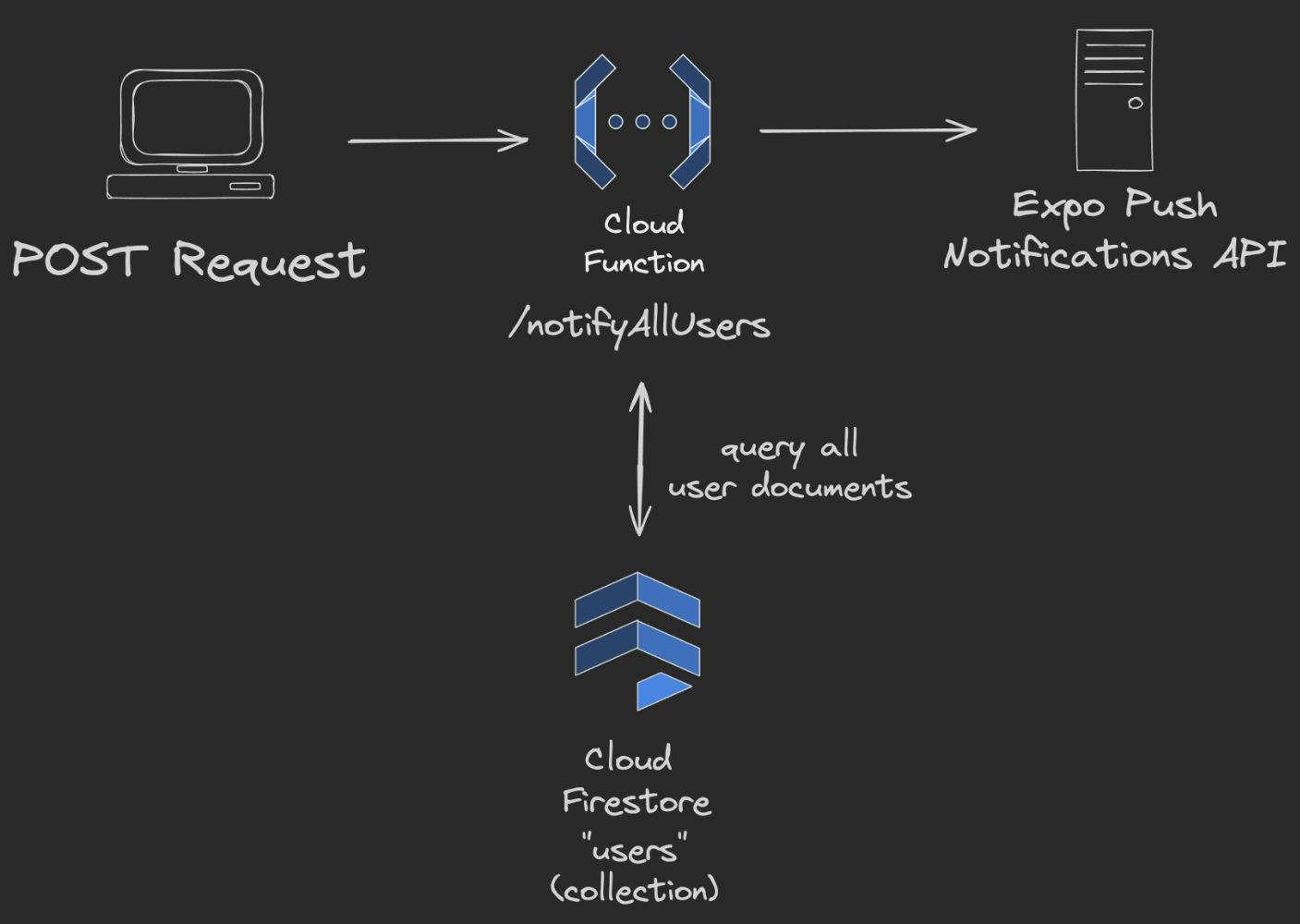

The Old Architecture

The initial push notification service was a single Firebase Cloud Function. It queried Firestore for all user documents where the “pushToken” field was not null, created the push message objects, batched them in groups of 1000 messages, and sent the batches to the Expo push notification service, which forwards them to Apple's and Google's push services. The returned push notification tickets were then handled, so that push tokens that were no longer registered were removed from Firestore. Sending a push notification to all users was as simple as hitting one endpoint.
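The steps in that single function can be sketched roughly as follows. This is a minimal illustration, not the original code: the `UserDoc` and `PushMessage` shapes and the helper names are assumptions, and the ticket shape covers only the fields Expo documents for a push ticket (`status`, and `details.error` set to `DeviceNotRegistered` for tokens that should be removed).

```typescript
// Hypothetical shapes for illustration; the article does not show the real ones.
interface UserDoc {
  id: string;
  pushToken: string | null;
}

interface PushMessage {
  to: string;
  title: string;
  body: string;
}

// Only the ticket fields this sketch needs.
interface PushTicket {
  status: "ok" | "error";
  details?: { error?: string };
}

// Create one push message per user that has a push token.
function buildMessages(users: UserDoc[], title: string, body: string): PushMessage[] {
  const messages: PushMessage[] = [];
  for (const user of users) {
    if (user.pushToken !== null) {
      messages.push({ to: user.pushToken, title, body });
    }
  }
  return messages;
}

// Split a list into consecutive batches of at most `size` items.
function chunk<T>(items: T[], size: number): T[][] {
  if (size <= 0) throw new Error("size must be positive");
  const batches: T[][] = [];
  for (let i = 0; i < items.length; i += size) {
    batches.push(items.slice(i, i + size));
  }
  return batches;
}

// Tickets are assumed to come back in the same order as the messages sent,
// so the index of a failed ticket identifies the token to remove.
function unregisteredTokens(batch: PushMessage[], tickets: PushTicket[]): string[] {
  const tokens: string[] = [];
  tickets.forEach((ticket, index) => {
    const message = batch[index];
    if (message && ticket.status === "error" && ticket.details?.error === "DeviceNotRegistered") {
      tokens.push(message.to);
    }
  });
  return tokens;
}
```

All of this runs in memory inside one function invocation, which is part of why the approach stops working once the number of user documents gets large.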

However, this wasn't optimal, for several reasons. There was no history of which push notifications had been sent, because they were not stored anywhere. A non-technical person would probably not want to deal with an API to send push messages. And everything was handled by a single Cloud Function, which would not scale: it could end up querying too many documents, run out of memory, or exceed the maximum of 9 minutes that a Firebase Cloud Function can run for.

The bottleneck

This method works great for smaller applications, and it worked great for Fast Rhymes for a while as well. But now that there are 150K user documents with push tokens stored in Firestore, it no longer does: the Cloud Function times out at its 9-minute maximum, which is to be expected. Still, the code is useful and can be reused. The service just needs to be rearchitected.

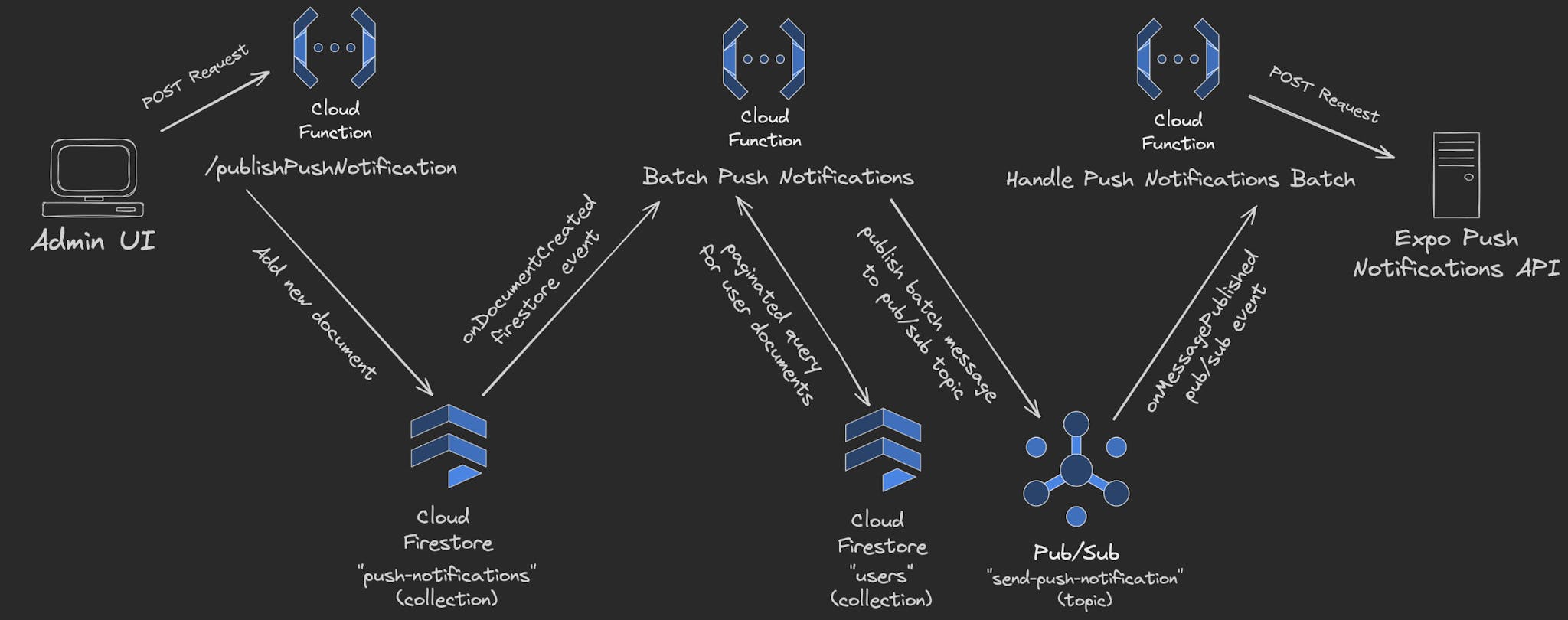

The New Architecture

When rearchitecting this service, there were a few important features to build towards, beyond the main goal of actually being able to send push notifications to our users: ease of use, push notification history, and the possibility to segment the audience in the future. Ease of use was solved by adding a couple of pages to the Next.js application, protected by Firebase Auth so that only specific users have access. One page is a simple dashboard that shows the sent push notifications, and the other is for sending new push notifications.

The push notification history was achieved by architecting the flow around a Firestore “onDocumentCreated” event. The Next.js application makes a POST request to the “/publishPushNotification” Cloud Function endpoint, through a server action to avoid exposing the API key to the client. The endpoint creates a new document in the “push-notifications” Firestore collection, and the creation of that document kicks off the batching of the push notifications.
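As a rough sketch of what the publish endpoint might do before writing to Firestore, the function below checks the API key and turns the request into the document that would be stored. The field names (`title`, `body`, `createdAt`, `status`), the way the key is passed, and the function name are all assumptions for illustration; the Firestore write and the trigger wiring are left out so the example stays self-contained.

```typescript
// Hypothetical request and document shapes; not taken from the real service.
interface PublishRequest {
  apiKey: string;
  title: string;
  body: string;
}

interface PushNotificationDoc {
  title: string;
  body: string;
  createdAt: string; // ISO timestamp, useful for ordering the history dashboard
  status: "pending";
}

// Validate the request and build the document for the "push-notifications"
// collection. Creating that document is what would start the batching.
function toPushNotificationDoc(
  request: PublishRequest,
  expectedApiKey: string,
  now: Date
): PushNotificationDoc {
  if (request.apiKey !== expectedApiKey) {
    throw new Error("unauthorized");
  }
  const title = request.title.trim();
  const body = request.body.trim();
  if (title.length === 0 || body.length === 0) {
    throw new Error("title and body are required");
  }
  return { title, body, createdAt: now.toISOString(), status: "pending" };
}
```

Because every notification becomes a stored document, the dashboard page can list the history by reading the same collection.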

The “Batch Push Notifications” Cloud Function does a paginated query on user documents from Firestore with a batch size of 1000 documents. For each batch the push tokens are extracted from the user documents and the push messages are created. This data is then put into a buffer and published to the “send-push-notification” Pub/Sub topic.
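The pagination loop could look something like the sketch below. To keep it runnable without Firebase, the Firestore query and the Pub/Sub publish call are passed in as functions: `fetchPage` and `publish` are hypothetical stand-ins, and the payload is a plain JSON string where the real function would put the data into a buffer. The cursor here is the ID of the last document on the previous page, which is one common way to paginate and is an assumption about how the real query works.

```typescript
// Hypothetical shapes and dependencies for illustration.
interface UserDoc {
  id: string;
  pushToken: string | null;
}

interface PushMessage {
  to: string;
  title: string;
  body: string;
}

// Returns up to `limit` user documents that come after `afterId` (null = start).
type FetchPage = (afterId: string | null, limit: number) => Promise<UserDoc[]>;
// Publishes one serialized batch to the "send-push-notification" topic.
type Publish = (payload: string) => Promise<void>;

// Walk the user collection page by page and publish one batch per page.
// Returns the number of batches published.
async function batchPushNotifications(
  fetchPage: FetchPage,
  publish: Publish,
  title: string,
  body: string,
  pageSize: number = 1000
): Promise<number> {
  let cursor: string | null = null;
  let published = 0;
  while (true) {
    const page: UserDoc[] = await fetchPage(cursor, pageSize);
    if (page.length === 0) break;

    const messages: PushMessage[] = [];
    for (const user of page) {
      if (user.pushToken !== null) {
        messages.push({ to: user.pushToken, title, body });
      }
    }
    if (messages.length > 0) {
      await publish(JSON.stringify(messages));
      published += 1;
    }

    // A short page means there are no more documents to read.
    if (page.length < pageSize) break;
    const last = page[page.length - 1];
    if (!last) break;
    cursor = last.id;
  }
  return published;
}
```

Only one page of documents is held in memory at a time, and the slow part (talking to Expo) is handed off to other function invocations through the topic.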

The last Cloud Function, “Handle Push Notifications Batch”, is triggered when a new message is published to the “send-push-notification” Pub/Sub topic. This Cloud Function takes the push data batch and sends it to the Expo Push Notifications API, which then forwards it to Apple's and Google's servers, from where the push notifications are delivered to the users.
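A minimal sketch of the handler side is shown below, assuming the batch arrives as a JSON string of push messages. The `send` parameter is a hypothetical stand-in for whatever client talks to the Expo Push Notifications API, and the message and ticket shapes are trimmed down to what the example needs.

```typescript
// Hypothetical shapes for illustration.
interface PushMessage {
  to: string;
  title: string;
  body: string;
}

interface PushTicket {
  status: "ok" | "error";
  details?: { error?: string };
}

// Stand-in for the call to the Expo Push Notifications API.
type SendToExpo = (messages: PushMessage[]) => Promise<PushTicket[]>;

// Decode and validate one batch taken from a Pub/Sub message.
function parseBatch(payload: string): PushMessage[] {
  const parsed: unknown = JSON.parse(payload);
  if (!Array.isArray(parsed)) {
    throw new Error("payload must be a JSON array");
  }
  return parsed.map((item: unknown, index: number) => {
    const m = item as Partial<PushMessage> | null;
    if (!m || typeof m.to !== "string" || typeof m.title !== "string" || typeof m.body !== "string") {
      throw new Error(`invalid message at index ${index}`);
    }
    return { to: m.to, title: m.title, body: m.body };
  });
}

// Send one batch and summarize the tickets that come back.
async function handleBatch(
  payload: string,
  send: SendToExpo
): Promise<{ sent: number; failed: number }> {
  const messages = parseBatch(payload);
  const tickets = await send(messages);
  const failed = tickets.filter((ticket) => ticket.status === "error").length;
  return { sent: tickets.length - failed, failed };
}
```

Each batch is handled by its own invocation, so one slow or failing batch does not hold up the others, and no single function has to stay within the time limit for the whole audience.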

With this new architecture, audience segmentation can be added by putting additional optional fields on the push notification Firestore document. The batching function can then query the user documents based on these criteria.
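One way this could work is to translate the optional fields into a list of query filters, as in the sketch below. The segment fields (`platform`, `language`) are purely hypothetical examples, since segmentation is described only as a future possibility, and the `Filter` shape is a plain description of a condition rather than a call into the Firestore SDK.

```typescript
// Hypothetical segmentation fields on the push notification document.
interface Segment {
  platform?: "ios" | "android";
  language?: string;
}

// Plain description of one query condition on the user documents.
interface Filter {
  field: string;
  op: "==" | "!=";
  value: string | null;
}

// Always require a push token, then add one filter per segment field that is set.
function buildUserFilters(segment?: Segment): Filter[] {
  const filters: Filter[] = [{ field: "pushToken", op: "!=", value: null }];
  if (segment?.platform !== undefined) {
    filters.push({ field: "platform", op: "==", value: segment.platform });
  }
  if (segment?.language !== undefined) {
    filters.push({ field: "language", op: "==", value: segment.language });
  }
  return filters;
}
```

With no segment fields set, the result is the same query as before, so existing notifications to all users would keep working unchanged.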

Demo

Conclusion

In conclusion, scaling a push notification service in the cloud can be tricky. As demonstrated, simple approaches may work well at a smaller scale but can run into issues as the user base grows. It's also important not to overengineer when the need isn't there. Keeping complexity low at the start is essential, so you can focus on building good user experiences. Building out a complex service that scales right from the start often ends in tech debt, which slows the project down. It's a good idea to anticipate scaling challenges in a way that makes them manageable to solve later, when needed.

To learn more about Fast Rhymes, visit: fastrhymes.com

Andor Davoti - 31/01/2024